TipsForTeaming: Problem Framing and Prototyping: The 5-Day Shift That Drives Results in Agile Teams and AI Adoption

12/3/2025 · 6 min read

For years, I worked in corporate environments using scaled Agile practices. We followed the SAFe® framework, ran two-day PI Planning events, built backlogs, defined OKRs, ran maturity assessments, and delivered in sprints. Agile helped us move faster, adapt quicker, and coordinate better across teams.

We spent a lot of time talking to users, gathering input, and learning what might be possible. The people involved were experienced, thoughtful, and committed to delivering something valuable. But despite all of that, we weren’t always effective in generating great outcomes.

The problem wasn’t a lack of effort or skills; it was a lack of the right structure. The way we framed problems, ran discovery, and validated direction wasn’t sharp enough. It left space for interpretation, for assumptions, and for misalignment to quietly grow.

We often started with a business goal or a feature idea. We built solutions around that idea. We scoped epics and user stories, sometimes layered with input from research teams or user conversations. But when you looked closely, much of that pre-work was built on assumption, and on words.

We would describe the solution, talk about the user, outline what we thought they needed. And users, in turn, were asked to imagine what it might be like. Imagine how it would help. Imagine how they’d interact with something that didn’t yet exist.

The people doing this work were smart, experienced, and committed to creating impact. But the way we made decisions, and the conversations we had, weren’t always the best way to build clarity. We were preparing for delivery without deeply validating the problem. And that meant, too often, the real feedback came late, after the work was done.

When the Planning Is Precise but the Problem Is Not

Agile thrives on iteration. But iteration only helps if you’re starting from something real.

The uncomfortable truth is that many teams, even the high-performing ones, operate with a kind of silent doubt: Are we solving the right thing? Do we know what users really need?

But the system is built to move, so we move. The backlog grows. Velocity becomes the metric. And weeks, sometimes months, are spent delivering something that’s only partly aligned.

That’s not a failure of people. It’s a gap in process.

What I Wish We’d Had

In recent years, I have trained in Problem Framing and Design Sprint methods, especially as they apply to complex environments like AI adoption. And I kept thinking: this is what was missing in the SAFe® organisations I was part of. Not instead of Agile. Before it.

Problem Framing is a way to pause and clarify the challenge in an informed way:

What are we really trying to solve?

Why does it matter?

What assumptions are shaping our thinking?

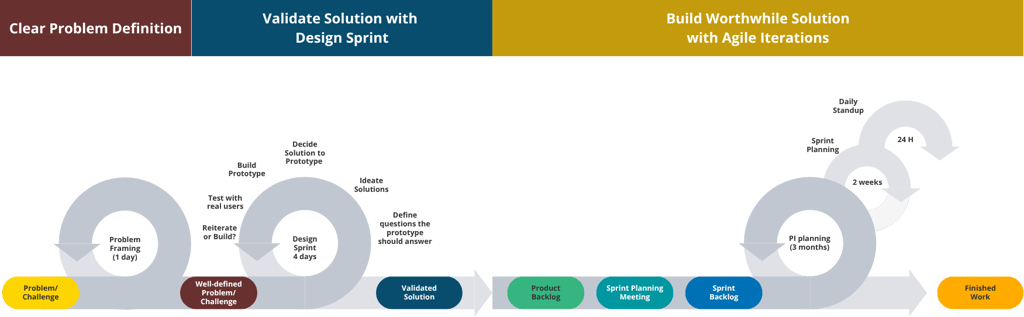

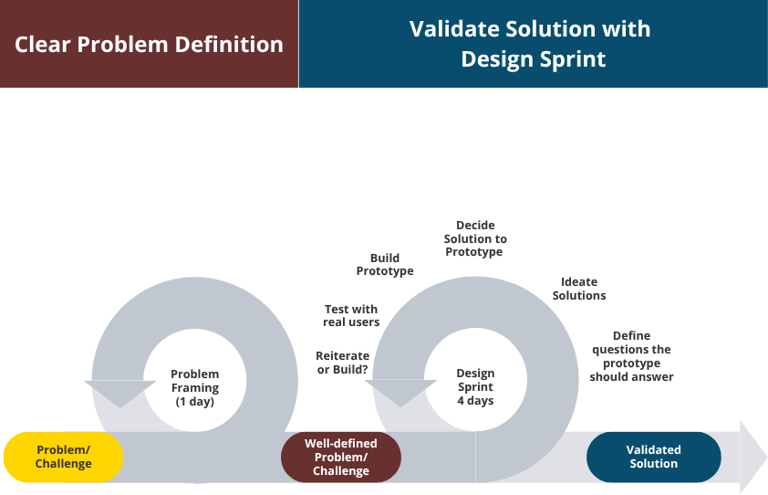

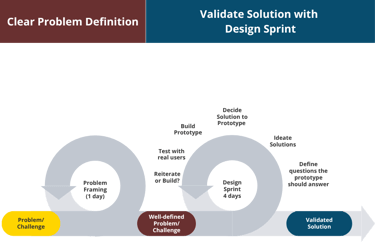

And then the Design Sprint (not related to Agile sprints; don’t let the name fool you) becomes the structure that moves us from aligned problem to tested solution, fast.

It brings together a small, focused team, not to talk, but to do. Over just a few days, they generate ideas, choose one, build a prototype, and test it with real users. Not just internal feedback. Not simulated engagement. Actual users. Actual insight.

And now, thanks to AI, much of this can be done in just 3 to 4 days.

From Idea to User Feedback in 4 Days

Here’s how the flow changes when you put clear problem framing and solution validation before planning.

In most traditional Agile cycles, planning begins with a solution already in mind. In some cases, teams even jump straight to building a prototype within a few days or weeks. Design Sprints introduce a purposeful step before that: align on the problem, define the key questions that must be answered to know we are on the right track, ideate solutions that answer those questions, decide which solution to prototype, build the prototype, test it with real users, and refine, all before the roadmap is set and the backlog is filled.

That’s where the magic happens. Because the alternative is planning features that sound good but don’t solve what matters. With a Design Sprint, the team leaves with:

Shared understanding

A tested prototype

Feedback from real users

And a level of confidence that saves time later

It doesn’t just help you move fast. It helps you move forward, with clarity.

The Power of a Temporary Team with a Clear Purpose

Problem Framing & Design Sprints aren’t just about tools or rituals. They are a masterclass in teaming.

A maximum of seven to eight experts from different functions (e.g. marketing, sales, product, software development, AI/data engineering, legal). Four days. One challenge, one problem to solve. And a clear rhythm for thinking, deciding, creating, and learning together.

Everything is intentional, from roles, to time-boxes, to what happens in each session. And because it’s time-limited, there’s no space for spinning. There’s just space for insight.

This is what most long-term teams spend months developing:

Psychological safety

Clear decision-making

A shared language of action

The ability to collaborate under pressure

But in a Design Sprint, that environment is designed from the start.

And that’s the lesson: Effective teaming doesn’t require history. It requires structure.

Why It Matters, Especially Now with the (Gen)AI Hype

Organisations everywhere are under pressure to innovate faster, especially with AI. But urgency isn’t the same as clarity. What we’re seeing now isn’t a failure of potential; it’s a failure of translation: from ambition to execution, from demo to deployment, from pitch deck to product.

And the statistics are hard to ignore:

80% of AI projects fail (RAND, 2024)

Only 48% of AI models reach production (Gartner, 2024)

Only 26% of companies scale beyond PoC (BCG, 2024)

42% of companies report abandoning most of their AI initiatives (S&P Global, 2025)

It’s not that teams aren’t skilled. Or committed. Or resourced. The issue is deeper, and more human.

Here are four consistent reasons for failure, all of which are preventable with better framing, structure, and teamwork:

1. Unclear goals and weak links to business value

AI initiatives often begin without a clear connection to outcomes that matter. Organisations chase innovation for its own sake (“we should do something with AI”) rather than anchoring it to value (“we’re using AI to solve X for Y”).

→ BCG (2024) and S&P Global (2025) rank this as a top failure point for scale and adoption.

2. Poor use-case alignment

Too many initiatives are built around what’s technically possible, not what’s strategically needed. And the result is shallow impact or beautiful solutions to the wrong problems.

→ Gartner (2024) found that use-cases often reflect internal pressure rather than user or business priority.

3. Lack of user validation

You can’t generate adoption through explanation alone. Yet most AI products rely on imagined workflows or generic use-cases, without ever putting the prototype in the hands of real users.

→ McKinsey (2025) reports that the organisations seeing the strongest financial returns from AI are those that redesign and validate real human-AI workflows, rather than stopping at a working prototype.

4. Misunderstood or poorly framed problems

Speed is a strength, but only when it’s pointed in the right direction. Without clearly framing the problem, teams risk building brilliant answers to irrelevant questions.

→ RAND (2024) and EPAM (2025) trace high failure rates to premature solutioning and shallow problem understanding.

This is where Problem Framing and Design Sprints create the shift. They don’t slow the team down; they make sure you’re working in the right direction. Before the backlog is built. Before the roadmap is set.

It’s not about perfection. It’s about precision, in what you’re solving, who you’re solving it for, and how you know it works.

In a world chasing faster delivery, the real edge isn’t in the tech. It’s in the thinking that comes before the tech.

Final Thought

You don’t need months of meetings to know whether your idea will land.

You don’t need three planning decks and a room full of sticky notes to align.

Sometimes, what you need is four days. The right people. A clear structure. And a willingness to learn before you commit.

Design Sprints offer that.

And for teams who want to build the right thing, not just build quickly, they might just be the best four days you’ll ever spend.

References & Sources

RAND Corporation (2023–2024) https://www.rand.org/pubs/research_reports/RRA2680-1.html

Gartner via Forbes (2024) https://www.forbes.com/councils/forbestechcouncil/2024/11/15/why-85-of-your-ai-models-may-fail/

Boston Consulting Group (BCG) (2024) https://www.bcg.com/press/24october2024-ai-adoption-in-2024-74-of-companies-struggle-to-achieve-and-scale-value

https://media-publications.bcg.com/BCG-Wheres-the-Value-in-AI.pdf

CIO Dive (2025) https://www.ciodive.com/news/AI-project-fail-data-SPGlobal/742590/

EPAM Systems (2025) https://www.epam.com/about/newsroom/press-releases/2025/what-is-holding-up-ai-adoption-for-businesses-new-epam-study-reveals-key-findings

NTT Data (2024) https://www.nttdata.com/global/en/insights/focus/2024/between-70-85p-of-genai-deployment-efforts-are-failing

McKinsey (2025) https://www.mckinsey.com/~/media/mckinsey/business%20functions/quantumblack/our%20insights/the%20state%20of%20ai/2025/the-state-of-ai-how-organizations-are-rewiring-to-capture-value_final.pdf

S&P Global (2025) https://www.spglobal.com/market-intelligence/en/news-insights/research/ai-experiences-rapid-adoption-but-with-mixed-outcomes-highlights-from-vote-ai-machine-learning


amaia@amaialesta.com
