Tips for Teaming with AI: The Organisational Blind Spot in AI Adoption

A reflection on AI adoption, and the risk of overlooking organisational identity data as an input.

2/9/2026 · 5 min read

Over the past months, I have been spending a lot of time learning, training, and working with organisations that want to bring AI into their way of working to generate better outcomes. Innovation, AI adoption, and how teams make this transition well have been a big focus of my work and learning recently.

One theme keeps coming up again and again: data.

There is a lot of discussion about data in AI adoption. Data quality. Data bias. Not enough data. Too much data. Structured versus unstructured data. All of this matters. I am fortunate to be part of several strong networks of business consultants, coaches, facilitators, and women working in AI. In these spaces, there is thoughtful and necessary attention on privacy, inclusion, equity, bias, and ethics. These conversations are essential and are shaping more responsible approaches to AI adoption.

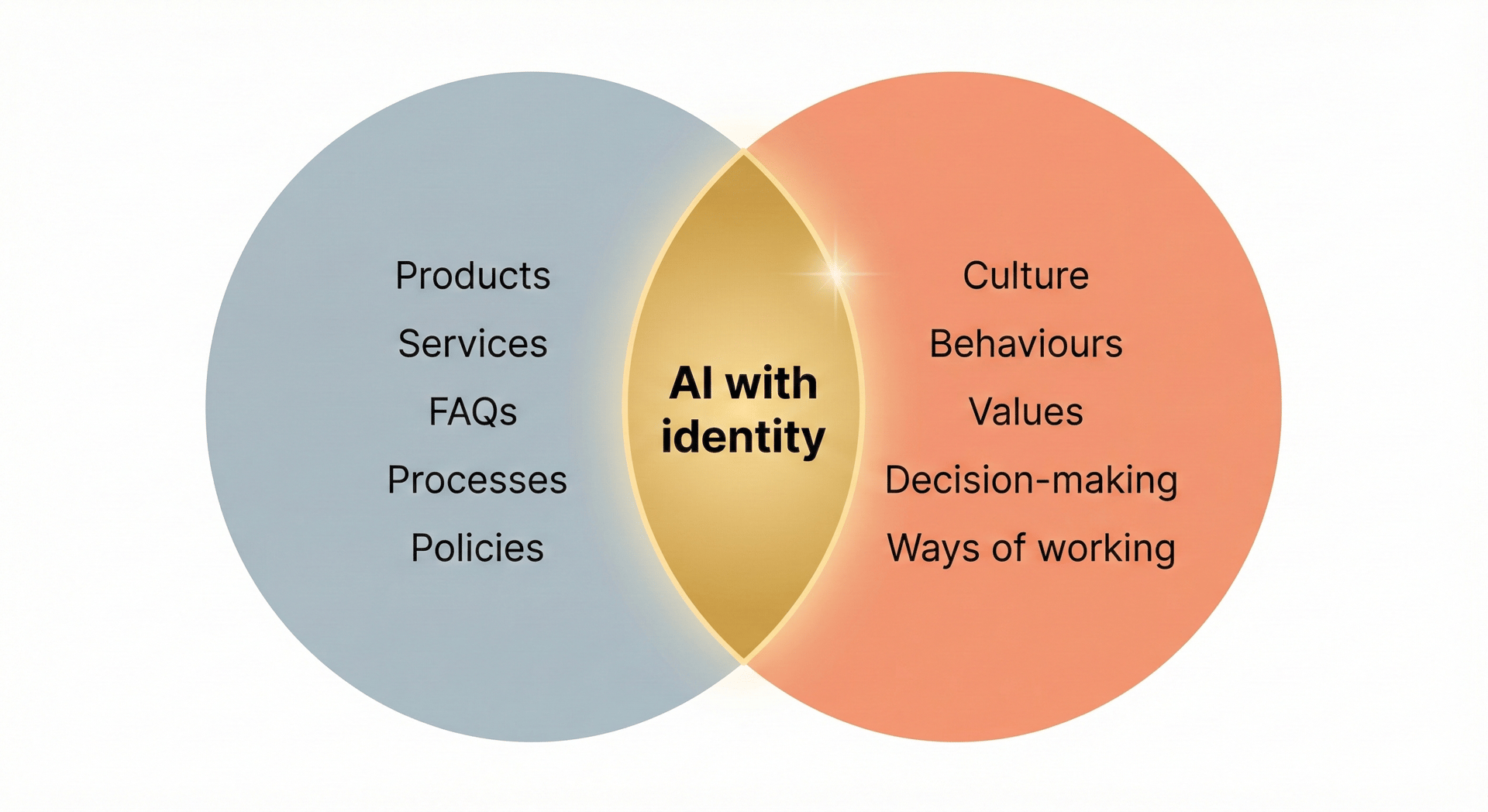

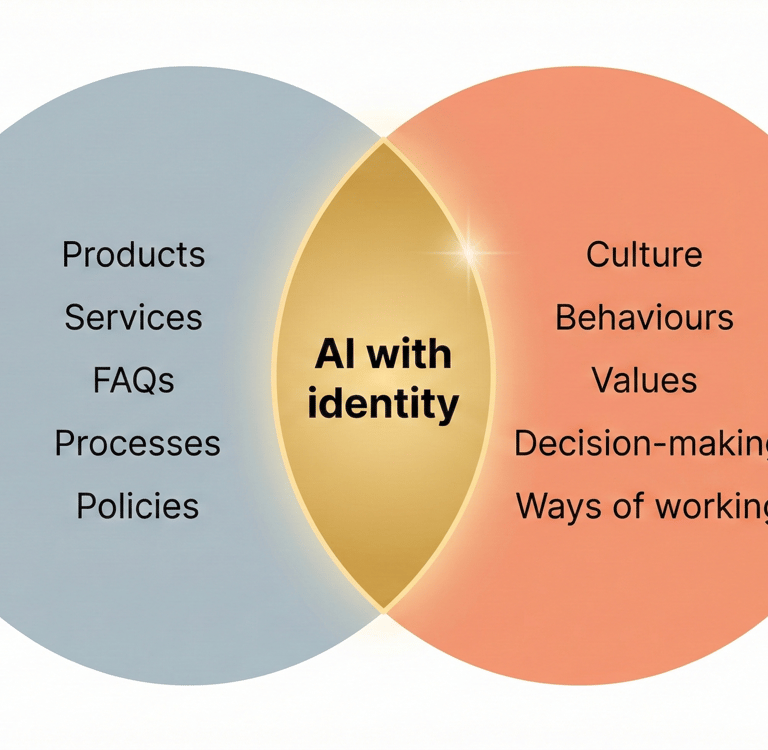

At the same time, many organisations invest significant time preparing what I will call hard data: product information, service descriptions, FAQs, pricing, processes, policies, and operating hours. This is the equivalent of hard skills in people. Necessary, visible, measurable, and relatively easy to document. AI tools need this information to function correctly.

And yet, something essential is often missing.

If the ambition is for AI to support an organisation in a way that feels distinct, aligned, and genuinely helpful, then AI needs access to another category of data altogether. Data about who you are as an organisation. Your culture. Your behaviours. Your values. How decisions are made. How trade-offs are handled. How people show up under pressure. How customers are treated when things do not go to plan. Your history.

This is the soft data of an organisation. The equivalent of soft skills in people.

This is not a soft concern. It is a strategic one.

What Most Organisations Feed Their AI

When leaders begin exploring AI, the focus naturally goes to what is visible and tangible. The hard data. What the organisation does, sells, delivers, and promises. From a technical perspective, this makes sense. AI systems need reliable, structured information to respond accurately.

From a differentiation perspective, it is incomplete.

Many organisations operate in similar markets, with comparable offerings and overlapping capabilities. If AI is trained only on hard data, the outputs will naturally converge. Similar inputs lead to similar results. This is why so many chatbots sound the same. This is why AI-generated content often feels generic. This is why customer interactions powered by AI struggle to feel human or distinctive.

It is not because the technology is weak. It is because the dataset is incomplete.

What Is Missing: Soft Data as Organisational Identity

One insight that became particularly clear to me during recent training for business AI consultants is that AI does not only learn from what you explicitly provide. It also learns from patterns of interaction. From how questions are framed. From what is prioritised. From what is ignored. From what is rewarded. From what is corrected.

Culture is data.

Behaviours are data.

Values are data.

Decision-making patterns are data.

Organisational history is data.

If these elements are not made explicit, AI will still form a model. It will infer who you are from signals, prompts, feedback, constraints, and usage patterns. That inferred model may or may not represent you in the way you intend.

If AI is used to speak on your behalf, whether in customer support, marketing, content creation, or internal assistance, then AI is already representing your organisation. The real question is whether it represents you accurately, consistently, and intentionally.

Why This Matters More Than It First Appears

Let us take a concrete example.

Imagine the automotive industry. On the surface, all car manufacturers produce cars. They all have engines or batteries, wheels, safety standards, colours, pricing tiers, and customer support processes. If AI systems were trained only on product specifications, policies, and FAQs, the one representing one manufacturer could sound remarkably similar to the one representing another.

And yet, we instinctively know that BMW, Audi, Tesla, and Volvo stand for different things. They make different choices. They prioritise different experiences. They communicate differently. They attract different customers and different employees.

That difference is not in the hard data. It is in the soft data. In the culture. In the values. In the decision rules. In the history of how the organisation has chosen to act over time.

When AI is trained only on what an organisation does, it produces sameness. When AI is informed by who the organisation is, it can support differentiation.

This is why two organisations with similar products can feel entirely different to interact with, and why AI adoption that ignores organisational identity often leads to outputs that feel “not us”, even when they are technically correct.

Why This Matters for Leaders Starting with AI

For founders and leaders who are not deeply technical, AI can feel overwhelming. The conversation quickly shifts to tools, vendors, architectures, and capabilities. It is easy to believe that AI adoption is primarily a technical challenge.

In practice, it is a clarity challenge.

If an organisation is unclear about who it is, how it wants to show up, and what behaviours it values, AI will amplify that lack of clarity. The technology will work, yet the outcomes will feel misaligned. Teams will sense that something is off. Customers may struggle to connect. Leaders may find themselves correcting outputs rather than trusting them.

AI is a tool to achieve a purpose. When the purpose is unclear, or when organisational identity is implicit rather than explicit, AI does not resolve the problem. It magnifies it.

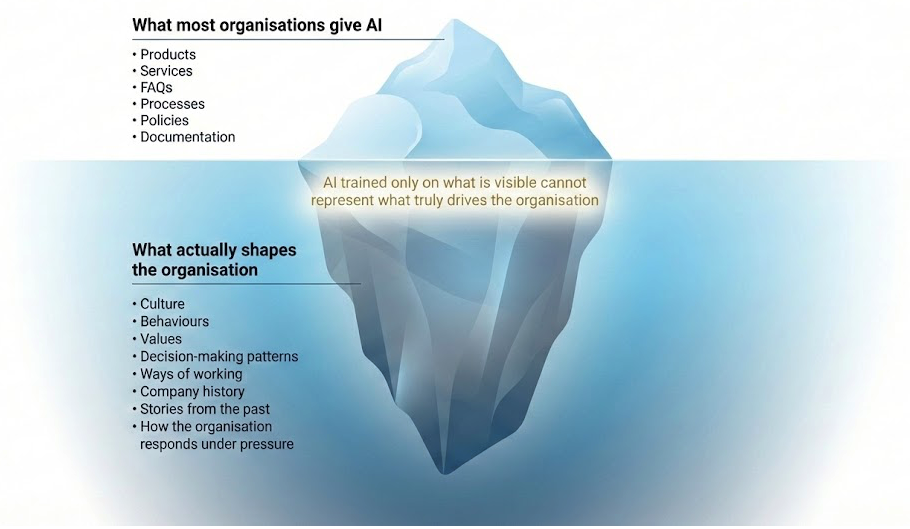

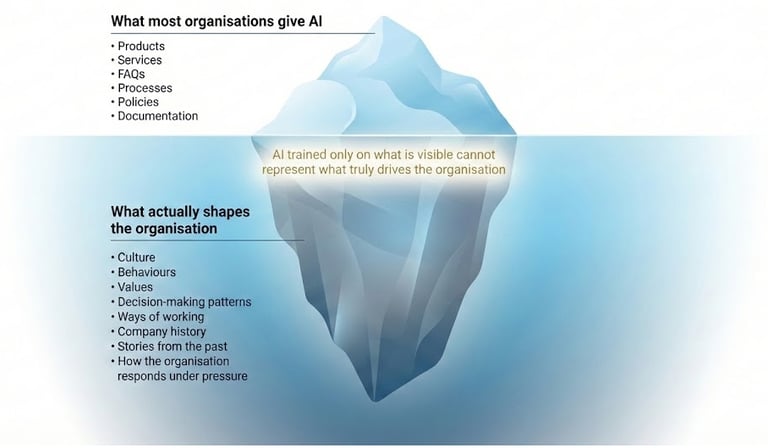

A Simple Visual

Imagine an iceberg.

Above the waterline sit the hard data: product information, services, processes, policies, documentation. This is what most organisations focus on when preparing for AI adoption.

Below the waterline sit the soft data: values, behaviours, decision rules, communication style, cultural norms, and organisational history. This is what shapes how the organisation actually operates day to day.

AI trained only on what is visible above the surface will never fully represent what drives the organisation below it.

The Leadership Question This Raises

If an organisation wants AI to help create better outcomes, not just faster ones, then clarity becomes a leadership responsibility.

AI will reflect back what it is given and what it observes. It will either reinforce sameness or support meaningful differentiation. That depends less on the model and more on organisational self-awareness.

Before asking what AI can do for you, a more useful question is this:

How clear are we about who we are, and how we want to show up, when technology acts on our behalf?

In the next post in this series, I will explore how AI already experiences leadership style and teaming dynamics through everyday interactions, and why this matters more than most leaders realise.

Note: Images generated by me in Gemini.

amaia@amaialesta.com