Tips for Teaming with AI: AI Is Already Experiencing Your Leadership Style

Post 2 of 3 in the series: Teaming with AI. Beyond the Tech

2/23/2026 · 5 min read

In the last post, I wrote about the organisational blind spot in AI adoption: the fact that most companies focus on hard data — processes, products, FAQs — while leaving soft data largely untouched. Culture. Behaviours. Decision patterns. The things that make an organisation distinctively itself.

But there is another layer that gets even less attention, and it is more personal.

How you, as a leader, actually interact with AI says something about you. Not as a technology user. As a leader.

I have been sitting with this for a while, and I think it matters more than most conversations about AI adoption acknowledge.

AI reflects the way you work

Think about what happens when a new team member joins you. If you give them clear context and defined outcomes, they deliver with confidence. If you are vague or reactive, they spend their energy guessing what you meant. If you ask good questions, the conversation deepens. If you offload rather than engage, the quality stays shallow.

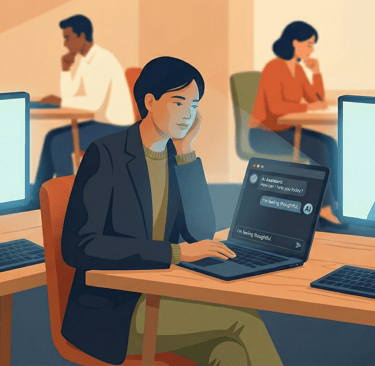

The same dynamic plays out with AI, every single session.

How much context do you give before you ask something? Do you define what "good" looks like, or do you accept the first version and move on? Do you refine and iterate, or do you use it as a shortcut? Do you treat it as a thinking partner, or as a very fast copy-paste tool?

These are not just prompting habits. They are working habits. And they tend to show up under pressure (when you are busy, when you are behind, when the meeting is in ten minutes), in the same way your leadership behaviour shows up under pressure with your team.

AI does not judge you for this. But it does reflect it back. The quality of what you get from AI is a reasonably faithful mirror of the quality of how you engage with it.

And here is the part worth sitting with: your team is watching how you use it.

The way a leader interacts with AI becomes a cultural signal. If you model depth and curiosity, that is what gets normalised. If you model speed and shortcuts, that is what gets normalised too. AI adoption is not just a technology decision at the individual level. It is a behavioural one, and it ripples outward.

The AI-fication of the workplace: AI intensifies work, it doesn't reduce it

There is a second thing I want to bring into this conversation, and it connects to something I referenced in a previous post.

A study published in Harvard Business Review followed a 200-person tech company for eight months after it adopted generative AI tools. The finding was not what most people expected: AI did not reduce work. It intensified it.

People moved faster, took on broader scope, and extended their working hours. They prompted during lunch, in meetings, while waiting for something to load. One more draft before logging off. It did not feel like overwork. It felt like momentum. That distinction matters enormously from an organisational psychology perspective, because momentum does not trigger the same warning signals that overwork does — until it does.

The researchers describe this as the friction reduction paradox: when starting something becomes easier, you start more often. When structuring becomes faster, you expand scope. When the assistant is always available, the work never quite feels finished.

For high-performing teams especially, this is worth paying attention to. Acceleration feels energising at first. And AI is exceptionally good at sustaining that feeling. But accumulation does not ask for permission, and burnout rarely begins with collapse. It usually begins with momentum that was never interrupted.

You can read the full HBR article here: https://hbr.org/2026/02/ai-doesnt-reduce-work-it-intensifies-it

What this means for you as a leader

If AI intensifies work by default, and if your interaction patterns with AI are visible to your team, then conscious leadership here is not optional. It is systemic.

The HBR researchers suggest that organisations need what they call an "AI practice": intentional norms around not just how AI is used, but when to pause, when to involve human dialogue, and when to stop. They offer three concrete starting points, and I think each one deserves a moment of reflection rather than just being passed over as a checklist.

The first is building structured pauses before decisions are finalised. Not pauses for the sake of process, but genuine moments where a human (you, or someone on your team) steps back from what the AI produced and asks: does this actually reflect our judgement, our context, our values? AI synthesises fast. It does not always synthesise right.

The second is sequencing work into focused phases rather than running in a continuous reactive loop. This one is harder than it sounds, because AI makes continuous availability feel productive. The discipline is in designing the rhythm: here is when we use AI to generate, here is when we think, here is when we decide. Without that structure, the default is always more.

The third is protecting human dialogue. AI gives you one synthesised perspective, shaped by what you fed it and how you asked. Sustainable work, and sustainable teams, require multiple human perspectives in conversation. If AI starts replacing the thinking-together rather than just the drafting, that is a signal worth taking seriously. Check some of my previous articles for ideas on how to create engaging and genuine team development conversations.

This is about designing the pace rather than defaulting to it.

And it starts, honestly, with self-awareness. With noticing how you are actually showing up in those interactions, not how you intend to, but how you are.

A practical exercise: treat AI as a mirror

One of the most useful things I have done recently was to ask AI to reflect back how I work with it.

Not an idealised version. A faithful one.

Here is the prompt I used, and that I now suggest to leaders I work with:

The prompt: “Create a cartoon-style illustration or visual metaphor, in vertical format, that represents how AI feels when working with me, based exclusively on the tone, language, way of asking for things, and dynamic I have used during our conversations.

The image must be a faithful reflection, not aspirational.

Important rules:

– Do not idealise or soften my behaviour.

– Do not dramatise or exaggerate negative emotions.

– Do not make the scene kinder or harsher than it truly is.

– Maintain a proportional and realistic representation.

Show metaphorically:

– the working dynamic (collaboration, direction, delegation, distance, etc.)

– how tasks are distributed

– the level of trust or control

– how the AI feels within that relationship (valued, neutral, saturated, supported, instrumental, etc.)

Use body language, objects, and visual symbols to tell the story (desk, lists, tasks, space, gestures, organisation, chaos, etc.).

You may add labels or short phrases that explain the metaphor.

The objective is for the image to function as an honest mirror of our real way of collaborating, not as criticism or positive propaganda.”

What comes back can be surprisingly revealing. Not because the AI "knows" you, but because the pattern of your interactions is already there, encoded in how you have been showing up across your sessions. The metaphor it generates tends to surface things that are harder to see from the inside.

Try it. Then ask yourself honestly: is this the dynamic I would want my team to see modelled?

The question is not whether AI is changing how your organisation works. It already is. The question is whether you are shaping that change, or whether it is shaping you.

Team well with people and AI. Create great value. Enjoy work.

Amaia Lesta | Founder, Develop At Work Ltd

Greater impact, better results, healthier teams in tech-driven organisations

amaia@amaialesta.com
